Regulated industries fund it. Hyperscalers build it. Everyone runs it.

A co-funded open source program delivering the complete POWER-class infrastructure stack (processor, management console, service processor, AI accelerator, and reference platform) at a fraction of today's proprietary system cost.

Regulated enterprises running POWER infrastructure need AI — but face a double lock-in: proprietary management and firmware stacks on their existing servers, and NVIDIA's closed ecosystem for any new AI workload. Hyperscalers and platform companies have spent billions building in-house silicon capability specifically to escape that same NVIDIA pricing trap. For the first time, their interests perfectly align. The ESI is the structure that lets both groups act on that alignment.

Deploying AI at scale on proprietary accelerator hardware carries per-unit costs and ecosystem lock-in that compound over time. An open inference accelerator designed and owned by its users breaks that pricing dynamic permanently.

DORA, NIS2, FFIEC, and emerging AI Act guidance increasingly require source-level visibility into critical infrastructure software. Proprietary management firmware and service processors executing below the OS cannot satisfy that requirement.

No open source alternative exists today for the enterprise POWER management console or for service processor firmware. The ESI builds both under Linux Foundation governance, with multi-vendor hardware support.

Regulated industries have capital and urgent requirements. Hyperscalers have in-house silicon design teams that have already taped out production AI chips. The ESI pairs the two — industry funds, hyperscalers build, everyone owns the result.

The Power ISA is the open foundation. The ESI builds everything above it — so that regulated industries can run a fully auditable, competitively sourced POWER-class infrastructure stack from processor silicon to management console.

Each project has a discrete charter, deliverables, steering committee, and milestone timeline. Consortium members fund the portfolio as a whole; individual projects may also receive targeted contributions.

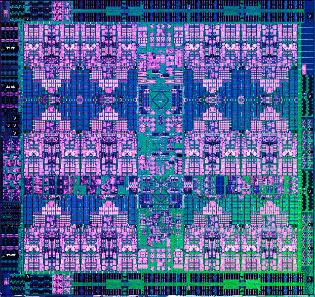

An open source POWER ISA processor core — based on the A2O out-of-order design — that any consortium member can synthesize, tape out at a commercial foundry, or deploy on FPGA. The silicon foundation beneath everything else.

An open source enterprise POWER management console. It manages logical partitions, live migration, power control, and hardware inventory across a POWER estate, with full source auditability, no appliance lock-in, and a documented REST API.

An open source service processor with enterprise-class capabilities (out-of-band management, hardware error logging, secure boot, and TPM-backed attestation), built on OpenBMC and housed in the OCP DC-SCM form factor.

A complete server platform reference design built on OCP Data Center Modular Hardware System standards, integrating OpenCore, OpenFSP, and OpenHMC into a published, multi-vendor manufacturable hardware stack.

An open source AI inference SoC: 32 systolic-array AI cores coupled to a POWER ISA control processor via the AXU interface, targeting enterprise AI inference performance in a 75 W PCIe card with full arithmetic auditability.

Two groups have arrived at the same problem from opposite directions. Regulated industries carry the cost of proprietary POWER infrastructure and face exploding AI inference bills from closed vendor ecosystems. Hyperscalers and platform companies have the silicon design expertise and the same motivation to break vendor-controlled AI hardware pricing. The ESI brings both groups together under the governance of the OpenPOWER Foundation, a Linux Foundation project with established IP frameworks and IBM patent coverage for all POWER ISA implementations.

All participation includes standard OpenPOWER Foundation membership. All consortium outputs are released under OSI-approved open source licenses under OPF governance. Contact us to discuss terms tailored to your organization's contribution model.

Three phases over 36 months, from specification to production-ready reference platform. Founding members lock in roadmap influence during Phase 1.

If your organization runs POWER infrastructure and is paying too much for AI — or if your silicon team already knows what open inference hardware should cost — contact us for the full technical brief and consortium prospectus.